Context Engineering: Structuring Input for Better Results

Imagine you are a product manager prepping an AI demo for your team. In one scenario, you simply ask the AI to “summarize our user feedback”. The result? A generic, unfocused summary that barely helps. In another, you frame the prompt with context - “You are a product analyst. Here’s a set of raw user feedback. Summarize the top 3 themes and quote one user comment for each.” - and the AI delivers a concise, insightful report with quotes. Same AI, different result. What changed? The way you structured the input. This is the essence of context engineering: shaping what information you feed a generative AI, and how, to dramatically influence its output.

Generative AI models like GPT-5, Gemini, Claude, etc. are incredibly powerful, but they are also highly sensitive to how you ask. As product managers (PMs) and tech leaders, treating the AI’s input “context” as a design surface can be a secret competitive advantage. In this article, we will explore how context windows and structured prompting (a.k.a. prompt engineering) impact output quality, and why mastering this skill is becoming crucial for AI-driven products. We will keep it strategic and practical - with real examples from ChatGPT, Notion AI and Duolingo. Let’s dive in.

What Exactly is a Context Window?

In simple terms, a context window is the amount of text (measured in units called tokens) an AI model can consider at once. You can think of it as the AI’s working memory or screen size for text. If you have used ChatGPT, you might have noticed it sometimes “forgets” details from very long conversations - that’s because you have exceeded its context window (older messages get dropped from what the model pays attention to). Current AI models have hard limits on this: for example, GPT-5 offers 196,000 to 400,000 tokens, and Anthropic’s Claude has recently expanded to 200,000 tokens (roughly 150,000 words). That means Claude can ingest about 500 pages of text in one go.

However, big isn’t always better. There’s a phenomenon researchers call “context rot”: as you stuff more and more into the context window, the model’s ability to accurately recall and use information can decrease. In other words, past a point, dumping everything into the prompt can backfire - the signal gets diluted with noise, and the model may lose focus. Just like a human with too much on their mind, an AI given a huge dump of text might struggle to pick out what matters. Plus, larger contexts mean higher costs and slower responses (processing thousands of tokens isn’t free or instantaneous). The key insight: the context window is finite and precious. Treat those tokens like a budget - invest them wisely on high-value information. This is where structured prompting comes in.
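To make the token-budget idea concrete, here is a minimal Python sketch. It assumes the rough rule of thumb that one token is about 3/4 of a word; real tokenizers vary by model and language, so treat the numbers as estimates. The function names and the price parameter are hypothetical and not taken from any vendor's API or price list.

```python
import math

# Rough heuristic: 1 token is about 3/4 of a word.
# Real tokenizers differ by model and language, so this is only an estimate.
WORDS_PER_TOKEN = 0.75


def estimate_tokens(text: str) -> int:
    """Estimate the token count from whitespace-separated words."""
    words = len(text.split())
    return math.ceil(words / WORDS_PER_TOKEN)


def fits_budget(text: str, context_limit: int, reserved_for_output: int) -> bool:
    """Check whether the input still leaves room for the model's reply."""
    return estimate_tokens(text) <= context_limit - reserved_for_output


def estimate_input_cost(tokens: int, price_per_million_tokens: float) -> float:
    """Estimate input cost; the price is a placeholder you supply yourself."""
    return tokens / 1_000_000 * price_per_million_tokens
```

Under this heuristic, a 150,000-word document comes out at roughly 200,000 tokens, which matches the words-to-tokens ratio quoted above. Running a check like `fits_budget` before every model call is one simple way to notice when a feature is about to spend its budget on low-value text.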

From Prompt Engineering to Context Engineering

If you have played with AI long enough, you have probably done some prompt engineering - crafting your query or instructions to get better results. “Prompt engineering” traditionally refers to writing and organizing the text prompt in a clever way to steer the AI’s output. This might include giving the AI a role (“You are a friendly tutor…”), adding example inputs/outputs, or phrasing the question specifically to nudge the answer you want.

Context engineering is the next level up - a broader perspective that has emerged as we build more complex AI applications. Instead of just wordsmithing a single prompt, context engineering asks: What information (and in what format) should we include across the entire context window to get the desired behaviour consistently? It’s a more holistic approach that factors in system instructions, conversation history, examples, and retrieved data - all the pieces that might go into that input text. Anthropic (the company behind Claude) describes context engineering as optimizing the configuration of all these tokens to reliably achieve an outcome. In their view, it’s a natural progression from prompt engineering.

For a Product Manager, the practical difference is scale and strategy. Prompt engineering might be figuring out the best wording for a one-off question. Context engineering is about designing the entire conversation or prompt template that your product will use repeatedly to drive an AI feature. It’s like the difference between writing a great one-liner versus designing a whole dialogue system.

Important Keywords

Context Window: The combined input + output capacity of an AI model - essentially how much text it can handle in one go. It’s measured in tokens, which are chunks of text (for rough comparison, 1 token ≈ 3/4 of a word). Models have limits like 32k, 100k, 200k or even 400k tokens. The window includes your prompts, any system instructions, recent conversation, plus the AI’s own response. If you exceed it, earlier text gets pushed out.

Token Limit: The maximum number of tokens the model can intake (and generate). Hitting the limit means either truncation or refusal. It forces trade-offs in how much info you can provide. Think of it as the character limit on a tweet, but for AI context.

Prompt Engineering: Crafting prompts (questions or instructions) strategically to guide the AI’s output. It can involve choosing the right words, providing step-by-step instructions, or giving examples, all to get an optimal result. It’s a bit art and bit science - knowing the model’s quirks and capabilities.

Prompt Scaffolding: A fancy term for structuring a prompt in stages or sections to handle complexity. Instead of asking one big, complicated question, you scaffold the interaction: break the task into steps or provide a series of prompts that build on each other. For example, you might first ask the AI to outline a strategy, then fill in details, then polish the result. Prompt scaffolding provides supportive steps for the AI, much like educational scaffolding helps students solve a complex problem one layer at a time. It’s especially useful when a task is too complex to get right in a single shot.

Alright, with those basics covered, let’s see how structuring this context actually affects AI performance - and how some well-known products leverage it.

Why Structured Prompts Yield Better Output

Large language models don’t truly “understand” meaning like humans do; they rely on patterns. So how you present information and instructions in the prompt hugely influences what pattern the model matches. A well-structured prompt is like giving the model a map and a compass. An unstructured prompt is more like dropping it in a wilderness and hoping for the best.

Here are a few ways structured input makes a difference:

Clarity and Reduced Ambiguity: By explicitly sectioning your prompt or using clear formatting, you tell the model what role each part of the text plays. For instance, if you label one part “Background info” and another “Instructions”, the model is less likely to confuse context with the task. Anthropic’s engineers actually recommend organizing prompts into distinct sections (e.g. <background>, <instructions>, <output format>) using markers like XML tags or Markdown headers. This kind of prompt template (a simple example of prompt scaffolding) helps the AI know what’s what. It’s similar to how a well-structured document with headers is easier to follow than a wall of text.

Stay within the “Attention Budget”: Remember that the AI has a fixed attention budget - it can’t give equal focus to every token if there are too many. A structured prompt prioritizes important information. If you front-load key instructions and keep irrelevant fluff out, you use the context window efficiently. As an example, OpenAI found that for very long prompts, repeating critical instructions at both the beginning and end of the prompt led to better adherence (the model is less likely to “forget” the directive in the middle of processing a lot of text). So, if you have pages of context, it may help to remind the AI of the goal at the start and again at the end.

Robustness to “Context Rot”: As mentioned, model performance can degrade as the prompt goes long (context rot). Structured prompting counteracts this by keeping the content relevant and digestible. Think of it as good information architecture for your AI: grouping related info, trimming extraneous bits, and sometimes summarizing past content. Techniques like compaction (summarizing earlier conversation once you near token limits) are essentially context engineering strategies to maintain coherence over long dialogues. In practice, if your product has a multi-turn chat, you might program it to summarize older messages once the convo gets too long - thus freeing space while preserving the gist.

Guided Reasoning: A clever prompt can guide an AI through a complex reasoning process. For example, you might instruct: “Let’s solve this step by step. First, outline the problem…then propose solutions…then evaluate each…” This stepwise prompt acts as a scaffold. It often yields more logical, thorough answers because the AI is effectively prompted to imitate a chain-of-thought. In training, these models saw many examples of step-by-step reasoning when the text included phrases like “Step 1:” etc., so giving a structured reasoning prompt cues the model to follow that pattern.
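The sectioning idea from the first point can be sketched in a few lines of Python. This is an illustrative template builder, not an official format: the tag names mirror the ones mentioned above, and the function name is made up for this example.

```python
def build_sectioned_prompt(background: str, instructions: str, output_format: str) -> str:
    """Wrap each part of the prompt in its own labeled XML-style section."""
    # Tag names are illustrative; any clear, consistent labels would do.
    sections = {
        "background": background,
        "instructions": instructions,
        "output_format": output_format,
    }
    parts = [f"<{tag}>\n{body.strip()}\n</{tag}>" for tag, body in sections.items()]
    return "\n\n".join(parts)


prompt = build_sectioned_prompt(
    background="Raw user feedback collected from the last release.",
    instructions="Summarize the top 3 themes and quote one user comment for each.",
    output_format="A numbered list with one quote per theme.",
)
```

Because every prompt is assembled by the same function, the structure stays identical across calls, which makes it easier to compare outputs when you change only one section.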

In short, structure imposes intentionality on the model’s otherwise free-wheeling text generation. As a PM, you are essentially designing an information layout for an AI, akin to designing a UX for a user. Just as a good UI guides a user’s behaviour, a good prompt structure guides the AI’s behaviour.
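The compaction technique mentioned earlier (summarizing older messages once a chat gets long) might look roughly like the sketch below. The `summarize` argument is a stand-in for whatever produces the summary, typically another model call; it is an assumption of this example, as is the message format.

```python
from typing import Callable

# Assumed message shape for this sketch: {"role": "user", "content": "..."}
Message = dict[str, str]


def compact_history(
    messages: list[Message],
    keep_recent: int,
    summarize: Callable[[str], str],
) -> list[Message]:
    """Replace all but the last `keep_recent` messages with one summary message."""
    if keep_recent < 1:
        raise ValueError("keep_recent must be at least 1")
    if len(messages) <= keep_recent:
        return list(messages)  # nothing to compact yet

    older, recent = messages[:-keep_recent], messages[-keep_recent:]
    transcript = "\n".join(f"{m['role']}: {m['content']}" for m in older)
    summary = {
        "role": "system",
        "content": "Summary of earlier conversation: " + summarize(transcript),
    }
    return [summary] + recent
```

The trade-off is that the summary is lossy: details the summarizer drops are gone for good, so it is worth checking whether answers stay accurate after compaction kicks in.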

Now let’s ground this in some real-world product examples to see how context engineering shows up in the wild.

Real-World Examples: Context Engineering in Action

ChatGPT: The Magic of Role and Format Prompts

OpenAI’s ChatGPT is the quintessential example of how a little prompt context goes a long way. If you just ask ChatGPT a vague question, you might get a decent answer. But give it more structured context - and it shines. For instance, users discovered that prepending a role or persona to the prompt can dramatically change the style and quality of the answer. Tell ChatGPT, “You are an expert lawyer. Explain this contract clause in plain English,” and the response will be more authoritative and simpler than if you just said, “Explain this clause”. The hidden secret is that ChatGPT itself is governed by an unseen system prompt that sets its friendly, helpful persona and certain rules. We as end-users can further engineer the prompt by adding instructions or examples.

Consider when you have a lot of info to supply (say, a long article you want summarized). If you paste the whole article and then at the very end say “TL;DR please”, the model might focus more on the end instruction and give a decent summary. But best practice, as mentioned, is to repeat or front-load the instruction: “Summarize the following article. [Full text of article]. Now, given the above, produce a TL;DR summary”. By wrapping the content with a clear ask at start and finish, you guide ChatGPT’s focus. Users of ChatGPT have also learned to format inputs with bullet points, code blocks, or other markup to influence the style of the output (the model often mirrors the format you use in the prompt). Essentially, ChatGPT taught a generation of PMs and users that how you ask matters - and that we are all, in a way, prompt engineers now.
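Wrapping long content with the same ask at the start and the end is easy to automate. The helper below is a small illustrative sketch; the function name and the delimiter lines are arbitrary choices for this example, not a required format.

```python
def sandwich_prompt(instruction: str, document: str) -> str:
    """Place the instruction before and after a long document."""
    return (
        f"{instruction}\n\n"
        f"--- BEGIN TEXT ---\n{document.strip()}\n--- END TEXT ---\n\n"
        f"Reminder: {instruction}"
    )


prompt = sandwich_prompt(
    "Summarize the following article as a TL;DR.",
    "Full text of the article goes here.",
)
```

The repeated instruction costs only a handful of tokens, so it is a cheap experiment to run against a single-instruction version of the same prompt.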

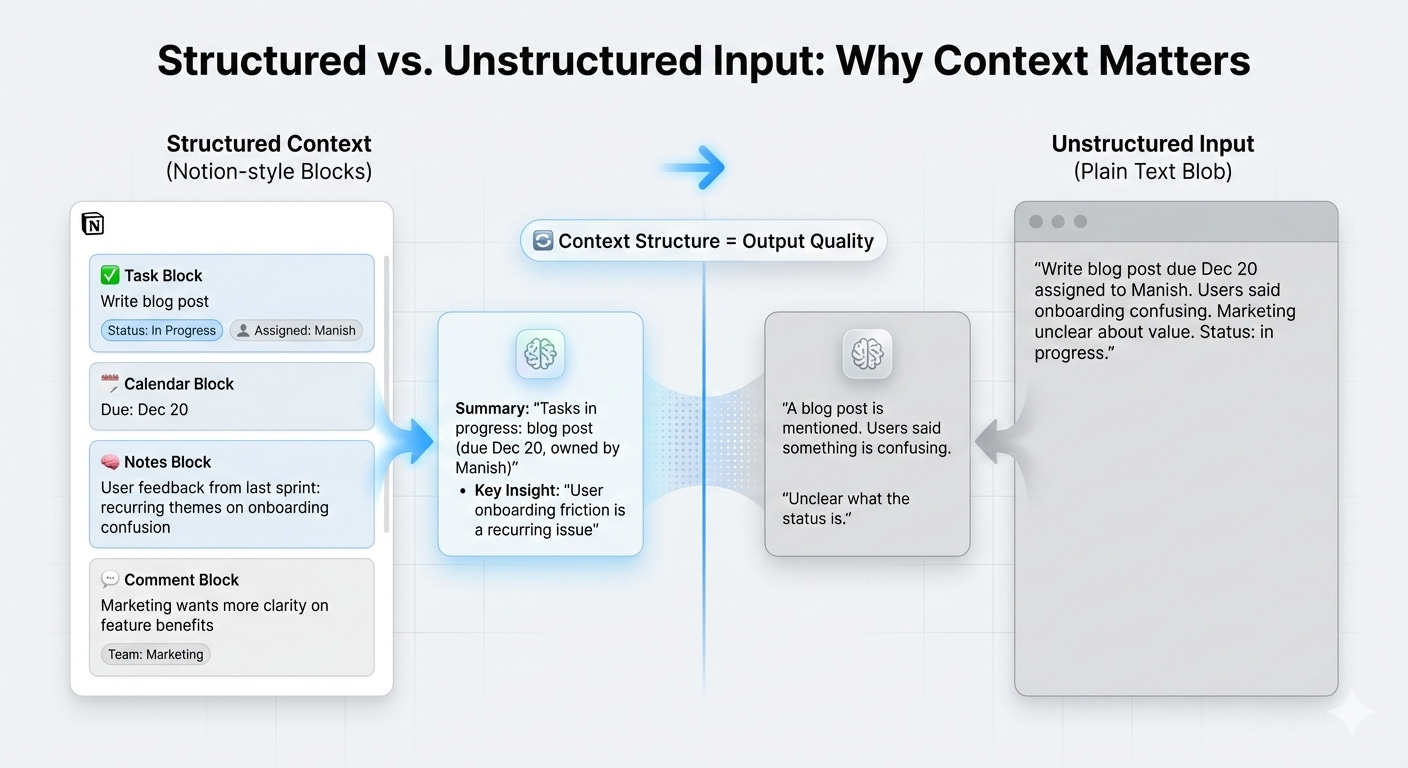

Notion AI: Leveraging Structured Workspace Context

Notion AI, an AI assistant woven into the Notion productivity app, benefits from the highly structured data that Notion contains. Notion’s product team has talked about their “block-based architecture” as an advantage for AI: every paragraph, to-do, or database entry in Notion isn’t just plain text, it’s a block with properties and relationships (like who assigned a task, due dates, hierarchy in docs, etc.). This means Notion AI can be given a rich, structured context rather than a raw text dump. As Notion’s AI lead Sarah Sachs explained, “Notion’s block-based architecture gives us something most tools lack: deeply structured context…That structure isn’t just for organization. It’s a foundation for more intelligent AI”. For example, when you ask Notion AI “What tasks are late and assigned to marketing?”, the system can actually understand the question in terms of the database structure (tasks with a Due Date before today, where Team is Marketing). The AI isn’t just doing keyword search; it’s operating on a graph of linked data, thanks to how the prompt and context are engineered.
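To illustrate why structured data helps, here is a toy Python sketch of answering the “late and assigned to marketing” question against typed records instead of raw text. This is not Notion’s implementation or API; the `Task` fields and function names are invented for the example.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Task:
    """A hypothetical task record with structured properties."""
    title: str
    team: str
    due: date


def late_tasks_for_team(tasks: list[Task], team: str, today: date) -> list[Task]:
    """Select tasks whose due date is before today and whose team matches."""
    return [t for t in tasks if t.team == team and t.due < today]


def format_tasks_as_context(tasks: list[Task]) -> str:
    """Render the selected tasks as compact lines to place in a prompt."""
    return "\n".join(
        f"- {t.title} (team: {t.team}, due: {t.due.isoformat()})" for t in tasks
    )
```

With properties like team and due date available as fields, the filtering happens before the model sees anything, so the prompt only needs to carry the few records that matter.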

Notion’s team also uses context engineering behind the scenes in crafting prompts for different features. They route different tasks to different AI models and carefully prompt each to play nicely with Notion’s data. Interestingly, Notion even has AI Data Specialists on the team - a hybrid QA/prompt-engineer role - who tweak and optimize prompts for each feature based on real user data. They evaluate where the AI might be going wrong and refine the instructions. This kind of continual prompt refinement is context engineering in practice: treating the prompting like a piece of the product that can be improved with feedback. The result is that Notion AI can draft a blog post for you, create meeting agendas, or generate project plans in a way that feels remarkably aware of your workspace context. It’s not magic - it’s thoughtful prompt and context design.

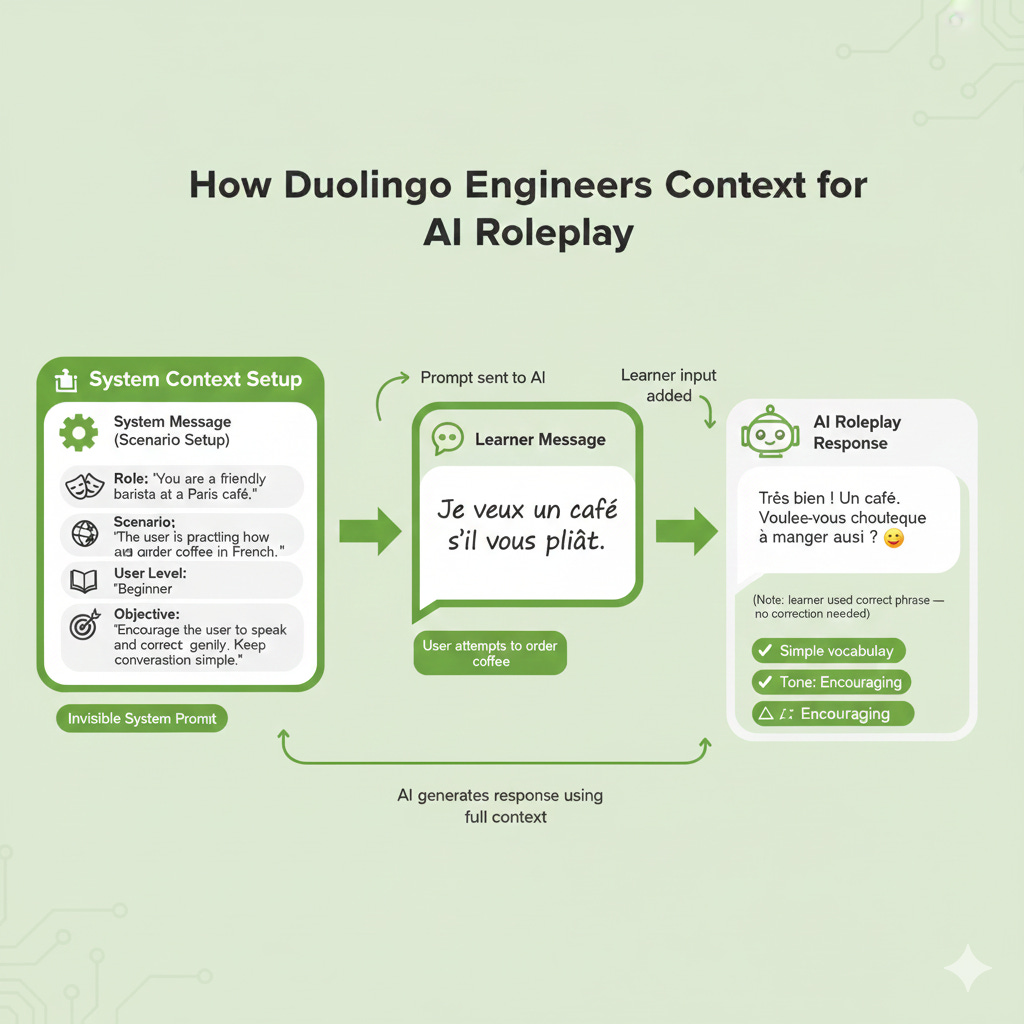

Duolingo: Guiding an AI Tutor with Scenario Context

Language learning app Duolingo made waves when it integrated GPT-4 to power its new “Explain My Answer” and “Roleplay” features. Getting an AI to act as a helpful tutor in a foreign language is a delicate dance - it needs the right persona, the right level of difficulty, and awareness of what the user knows so far. Duolingo’s PMs and designers approached this by carefully engineering the AI’s context for each exercise. According to Duolingo, their experts “write the scenarios that learners see in Roleplay…[They] make sure the initial prompt (e.g. ‘Talk about a vacation!’ or ‘Ask for directions’) is aligned with where the learner is in their course. Our experts also write the initial message in the chat and tell the model where to take the conversation”. In other words, they don’t just toss GPT-4 a generic “let’s chat about anything”. They set the scene and even provide the first turns of dialogue in many cases, to anchor the AI. By doing so, they constrain the AI to the context that’s pedagogically appropriate.

For the “Explain My Answer” feature (where the AI explains a learner’s mistake or confirms a correct answer), imagine the prompt structure needed. Likely, it includes the exercise question, the user’s answer, the correct answer, and then an instruction like: “Explain why the learner’s answer is correct/incorrect in a friendly tone and give a helpful tip”. Providing all that context in a structured format is crucial so that GPT-4’s explanation is accurate and relevant. If the prompt only said, “Explain this,” the model might hallucinate or give a generic response. Duolingo reports that they spent months refining these prompts with OpenAI’s help, and they have humans reviewing outputs for quality and tone. The payoff is a feature where the AI feels like a knowledgeable, encouraging tutor - because the context fed to it frames it as one.
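As a thought experiment, the kind of prompt structure guessed at above could be assembled like this. It is purely a hypothetical sketch: Duolingo’s actual prompts are not public, and the function name, the tutor persona line, and the simple string comparison used to judge correctness are all assumptions made for this example.

```python
def build_explanation_prompt(question: str, learner_answer: str, correct_answer: str) -> str:
    """Assemble exercise context plus an instruction into one structured prompt."""
    # Naive correctness check for illustration; a real product would need
    # something more forgiving (accents, synonyms, alternative phrasings).
    is_correct = learner_answer.strip().lower() == correct_answer.strip().lower()
    verdict = "correct" if is_correct else "incorrect"
    return "\n".join([
        "You are a friendly, encouraging language tutor.",
        f"Exercise: {question}",
        f"Learner's answer: {learner_answer}",
        f"Correct answer: {correct_answer}",
        f"Explain why the learner's answer is {verdict} in a friendly tone "
        "and give a helpful tip.",
    ])
```

Deciding correctness in code and passing the verdict to the model means the model only has to explain, not judge, which narrows the room for a confident but wrong explanation.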

These examples show that whether it’s an end-user manually tweaking a ChatGPT prompt, or a PM designing a prompt template in a product, the structure and content of the input radically shape the output. Great AI products often succeed not just because of the underlying model, but because of the unseen context engineering that guides the model’s behaviour.

Making Context Engineering Your Competitive Advantage

So why should PMs and product leaders deeply care about this? Because as AI becomes a commodity - many apps can call an OpenAI or Anthropic API - the differentiator will be how you use it. Context engineering is part of your product’s secret sauce. It’s analogous to UX design: everyone has the same buttons and menus to work with in a platform, but how you arrange them defines the experience. With AI, everyone might have access to GPT-5 or similar models, but how you construct the prompts and feed context will define the user experience and results.

Here are a few strategic reasons mastering context engineering gives you an edge:

Better Outcomes without Tuning the Model: Fine-tuning or training custom models is expensive and time-consuming. Often, you can get 80% of the way to a specialized capability just by prompt and context tweaks. For instance, rather than wait for a bigger model or expensive fine-tune to handle more knowledge, you can use retrieval and summarization to feed the model just the info it needs (common in AI search/chat products). Or if the tone is off, adjust the prompt’s wording or add an example of the desired tone. Prompts are much faster to iterate on than model weights. A team that’s good at this can ship effective AI features quicker.

Control and Consistency: As PMs, we worry about consistency and reliability. Prompting can feel stochastic (and it is), but a well-structured context can enforce consistency. Think of it as writing policy for the AI - you can include rules or style guides in the prompt. For example, if your brand voice is playful, you can include “Use a playful tone and occasional humor” in the prompt instructions for all outputs. If there are things the AI should never do (for safety or brand reasons), those go in the system prompt or instructions. Context engineering allows you to bake product requirements directly into the AI’s operating context. It won’t be foolproof, but it sets strong default behaviour. Many organizations create prompt templates or libraries for this reason, ensuring every AI interaction starts with a solid baseline of instructions.

Handling Complexity Through Decomposition: When your product needs the AI to do something complex (say, perform multi-step calculations, or parse a complex document and then answer questions), context engineering provides ways to break it down. You might do this behind the scenes with prompt chaining - first prompt to get intermediate results, then feed those into a second prompt, etc. This kind of orchestrated prompting (a form of scaffolding) can turn a single big hairy task into a series of manageable AI tasks. Teams that figure out the right sequence (often through lots of trial and error) can achieve feats that a one-shot prompt wouldn’t. It’s almost like designing an algorithm, but in natural language steps that the AI will follow.

Resource Efficiency: With ever-larger context windows available, it might be tempting to shove whole databases into a prompt. But aside from quality issues (we saw context rot and confusion), it’s also expensive - many AI APIs charge by token. One analysis pointed out that blindly using a giant context can “quickly spiral” costs, whereas a curated, targeted prompt versus a brute-force context can differ by orders of magnitude in expense. Smart context usage (e.g. summarizing, retrieving only relevant chunks, etc.) can achieve similar results far more cheaply. In a world where usage might explode, containing cost while maintaining quality is a competitive advantage for your margins (or for users, if you pass cost to them). PMs who internalize the token-budget mindset will design features that are not just cool but also cost-efficient.
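The prompt chaining idea from the decomposition point above can be sketched as a simple loop in which each step’s output becomes the next step’s input. `call_model` is a placeholder for whatever function sends a prompt to your model and returns its text; it is an assumption of this sketch, not a real library call, and the step wording is only an example.

```python
from typing import Callable


def run_chain(task: str, step_templates: list[str], call_model: Callable[[str], str]) -> str:
    """Run prompts in sequence, feeding each result into the next template."""
    result = task
    for template in step_templates:
        # Each template must contain an {input} placeholder and no other braces.
        result = call_model(template.format(input=result))
    return result


# Example steps: outline first, then fill in details, then polish.
STEPS = [
    "Outline a strategy for the following task:\n{input}",
    "Expand this outline into a detailed draft:\n{input}",
    "Polish the following draft for clarity and tone:\n{input}",
]
```

Keeping the steps in a plain list makes the sequence easy to reorder or A/B test, and passing `call_model` in as an argument lets you swap in a fake during testing so no tokens are spent.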

Finally, there’s a less tangible but important benefit: innovative product thinking. When you start viewing AI behaviour as something you can program through language and context, it opens up creative possibilities. You might envision new features that come from clever prompt design. Perhaps your app could automatically rephrase a user’s request to be a better prompt (like a built-in prompt helper), or maybe you realize you can combine two models - one to generate, another to critique (the “LLM-as-a-judge” approach). These ideas arise once you see context as a toolbox, not a black box. In a sense, we’re all becoming AI DJs, mixing context tracks to get the desired beat from the model.

Conclusion: Designing the AI Input is the New UX

The rise of generative AI has introduced a new design frontier: the design of the AI’s inputs. Context engineering is where technical understanding meets product intuition. It’s about being deliberate with what you feed the model - much like being deliberate with what you feed your users on a screen. For AI PMs building their personal brand (and products), championing this practice can set you apart as someone who doesn’t just use AI, but orchestrates it.

Actionable takeaways: As you work on AI features, try treating the prompt and context as first-class product elements. Document them, prototype changes, and A/B test different prompt versions if possible. Here are a few ideas to get started:

Audit your prompts: What hidden instructions or assumptions does your feature’s AI use? Write them down. Are they aligned with the experience you want? If not, iterate on them like you would on UI copy.

Establish a prompt template: Create a structured template for important AI tasks (e.g. always start with a brief context, then the instruction, then an example). Use consistent section headers or formatting. See if output quality improves with a more organized prompt.

Mind the tokens: Keep an eye on how close you get to token limits. If you are near the edge, is all the information truly necessary? Try removing or compressing less relevant bits and observe if the output becomes more focused. Experiment with summarizing older conversation history when building chat features - does the model still do well?

Ask “what’s missing?”: If the AI output isn’t great, consider what extra context it might need. Does it have the key facts? The style guidelines? Perhaps giving a quick example in the prompt would help. Conversely, if it’s going off track, maybe too much context is confusing it - try simplifying.

Engage and reflect: Next time you see an impressive AI output, ask yourself: What might the prompt or context have looked like behind the scenes? You will start recognizing the fingerprints of good context engineering in the products you use. And as you implement these ideas, I would love to hear: What results are you seeing? Has structuring your prompts differently made a noticeable difference in your AI’s performance? Feel free to share your experience or questions - after all, we are all learning how to better “talk” to our AIs. In this new era, those who master that dialogue will lead the way.

Thank you for reading. Happy innovating!